Roni Sengupta

Assistant Professor · Department of Computer Science · UNC Chapel Hill

Spatial & Physical Intelligence (SPIN) Lab

I lead the SPIN Lab at UNC Chapel Hill. My research lies at the intersection of Computer Vision and Computer Graphics and centers mainly on 3D Vision and Computational Photography. My lab is particularly interested in developing AI techniques that infer spatial and physical properties from images and videos: geometry, motion, material reflectance, material deformation properties, and lighting (Inverse Physics). We solve Inverse Physics problems to advance applications in Immersive Media (AR/VR, telepresence, content creation), Healthcare (medical image computing, surgical robotics), Robotics, and Physical Sciences (material design and engineering).

Prior to UNC, I was a Postdoctoral Research Associate at the University of Washington (2019–2022), working with Prof. Steve Seitz, Prof. Brian Curless, and Prof. Ira Kemelmacher-Shlizerman in the UW Reality Lab / GRAIL. I completed my Ph.D. at the University of Maryland, College Park (2013–2019), advised by Prof. David Jacobs. I received my undergraduate degree in Electronics & Tele-Communication Engineering from Jadavpur University, Kolkata, India (2009–2013). I have also worked with researchers at NVIDIA Research, Snapchat Research, the Weizmann Institute of Science, and TU Dortmund.

Awards & Honors

Research

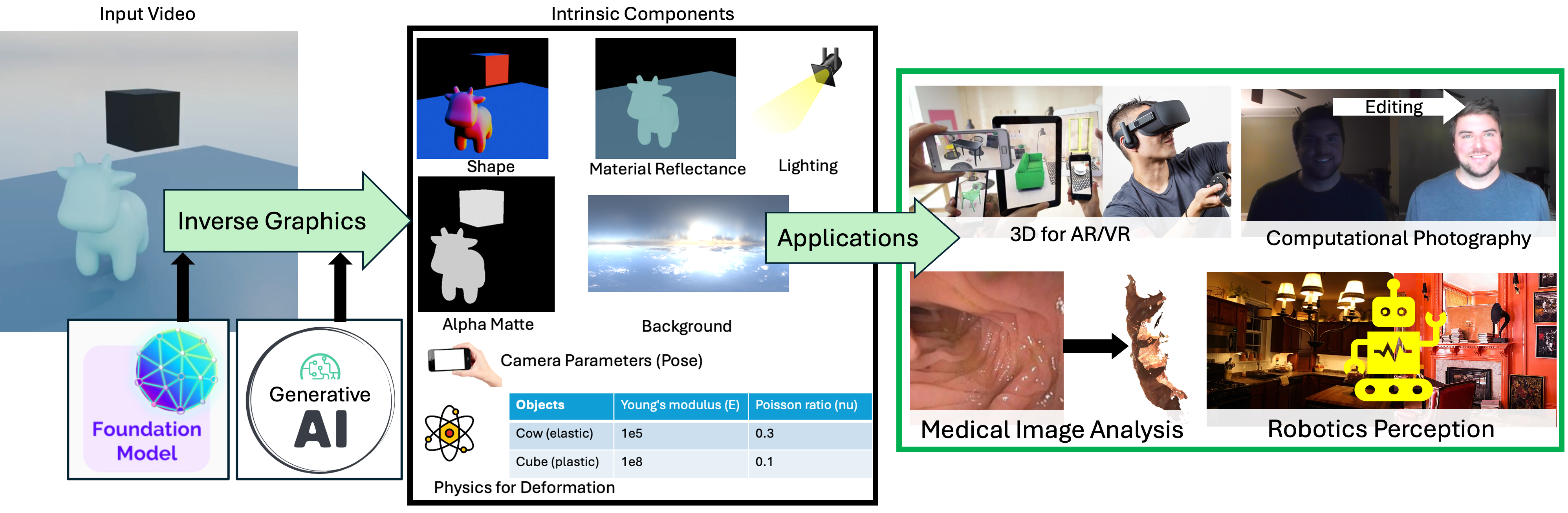

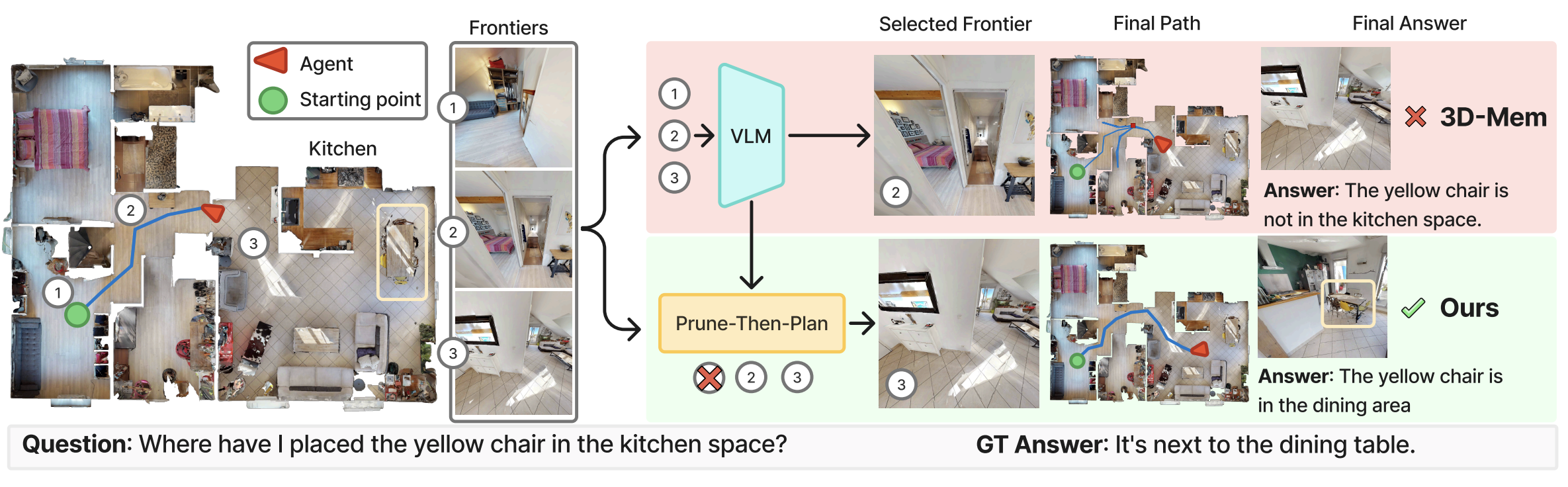

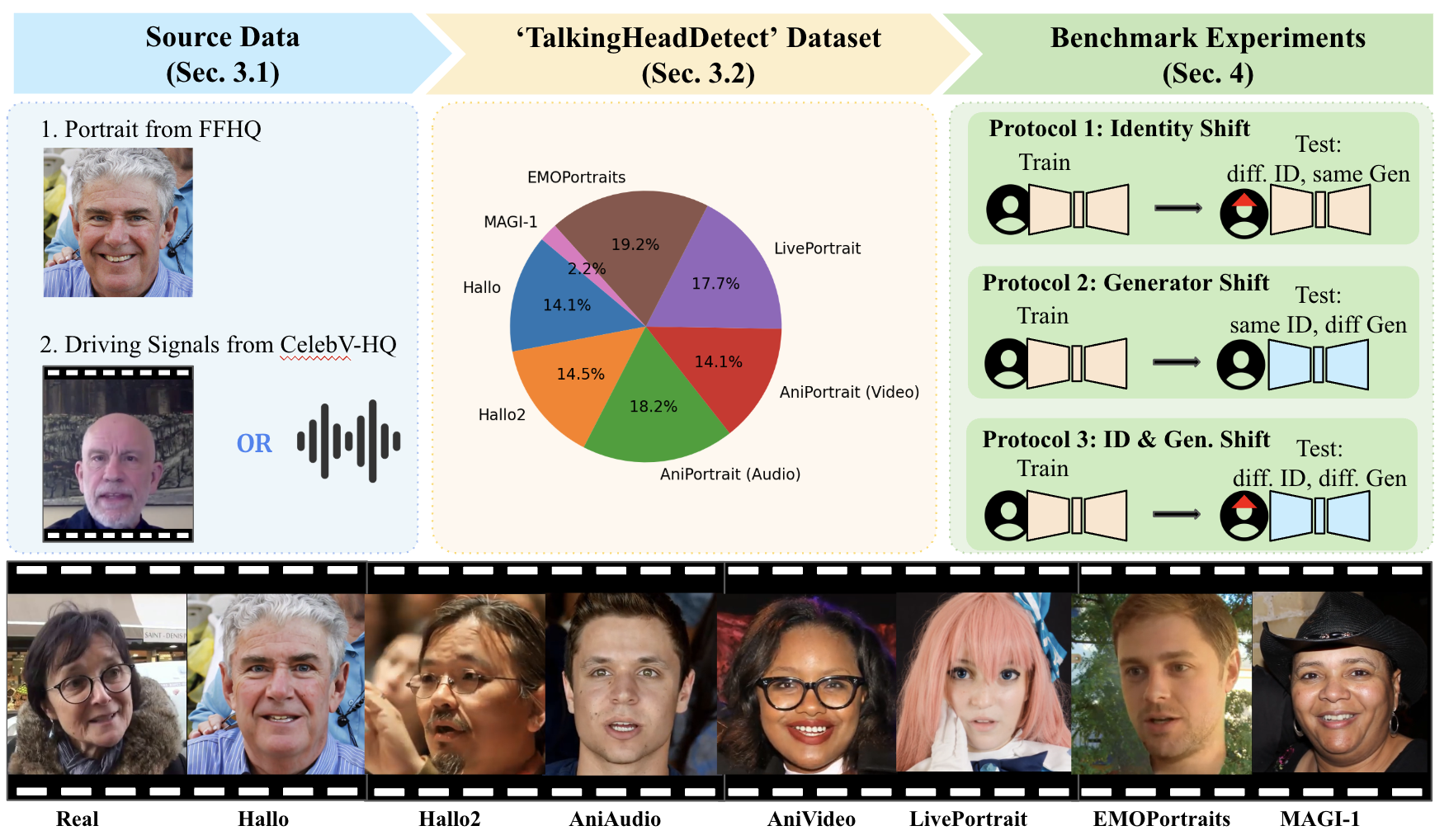

Our research sits at the intersection of Computer Vision and Computer Graphics, with a focus on 3D Vision and Computational Photography. We develop AI techniques that enable machines to understand the spatial and physical structure of the world from visual data.

Our work spans two complementary directions: explicit estimation of spatial/physical properties through inverse problems leveraging foundation AI models, and implicit manipulation of these properties using generative AI. We advance both fundamental methods and practical applications across VFX, AR/VR, robotics, telepresence, and healthcare.

Currently, our lab's efforts center on four synergistic research themes:

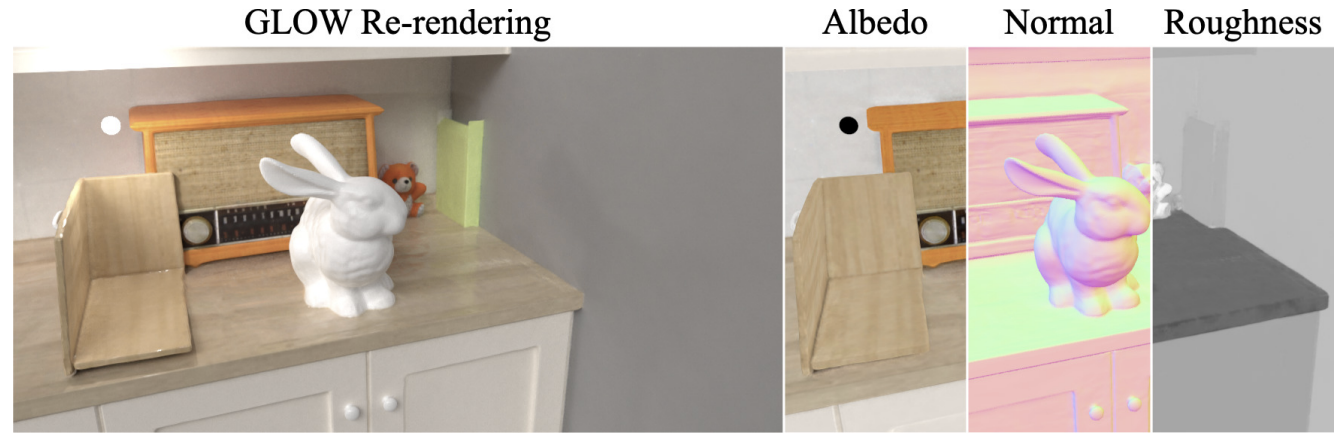

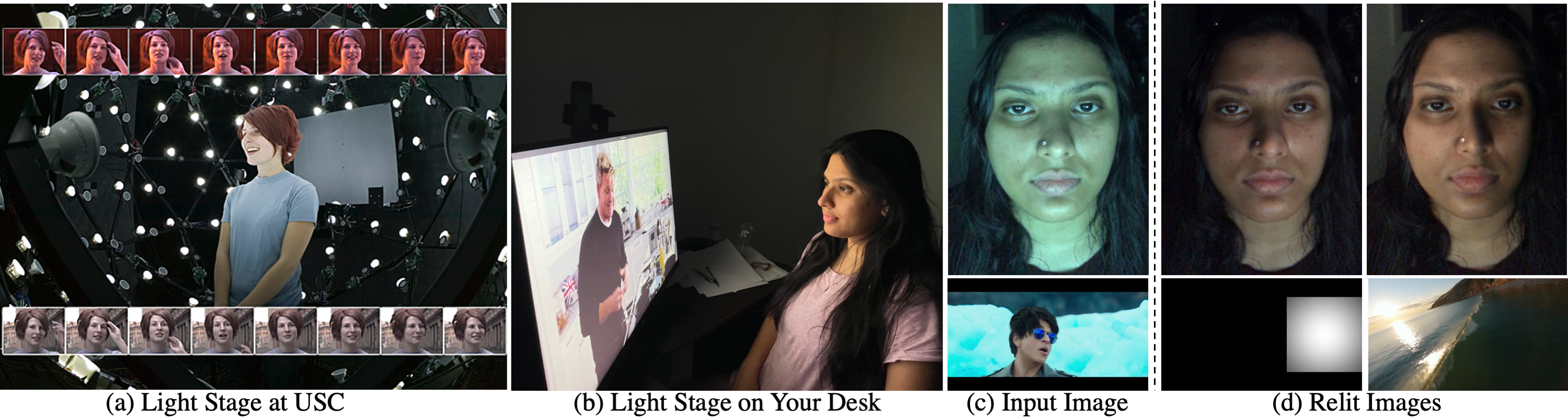

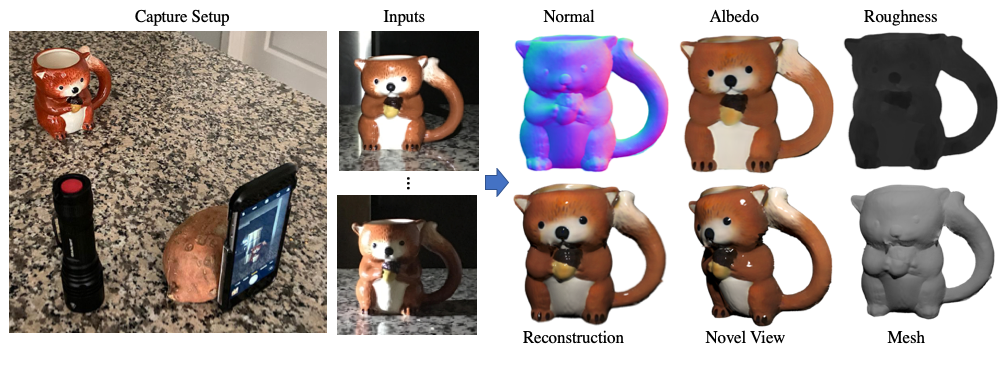

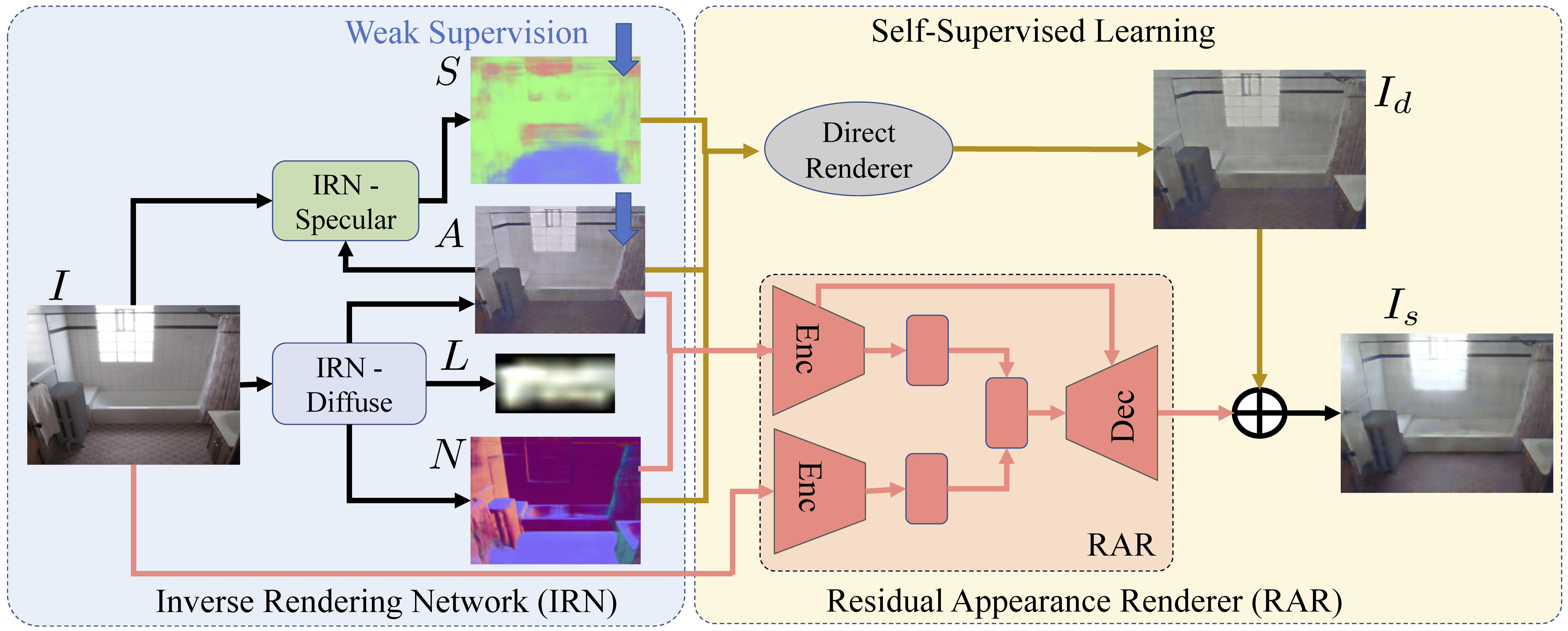

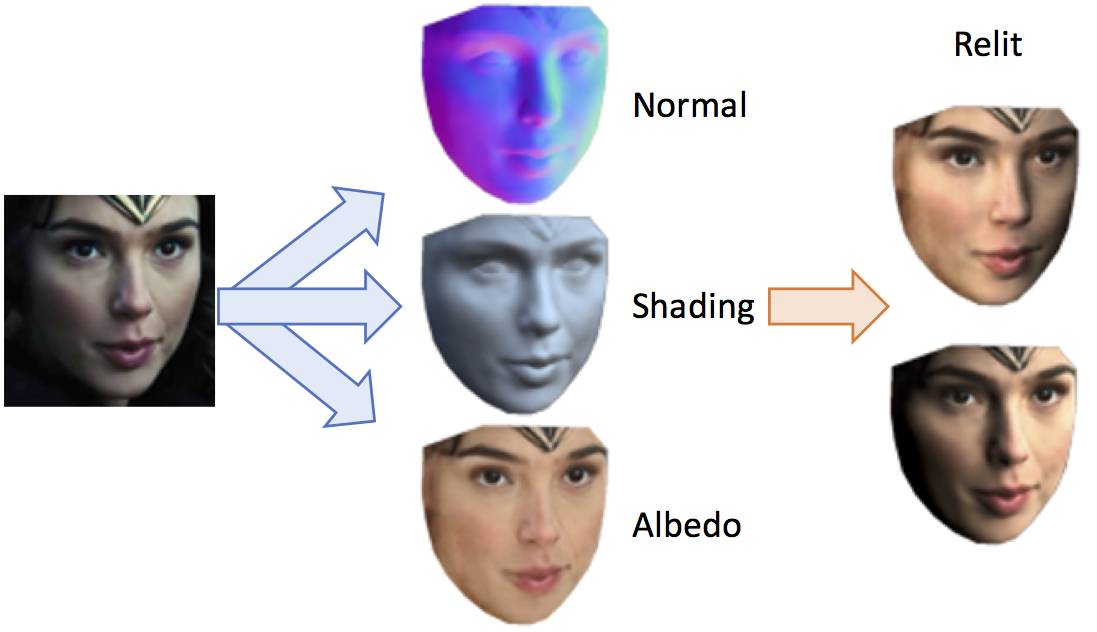

Inverse Rendering

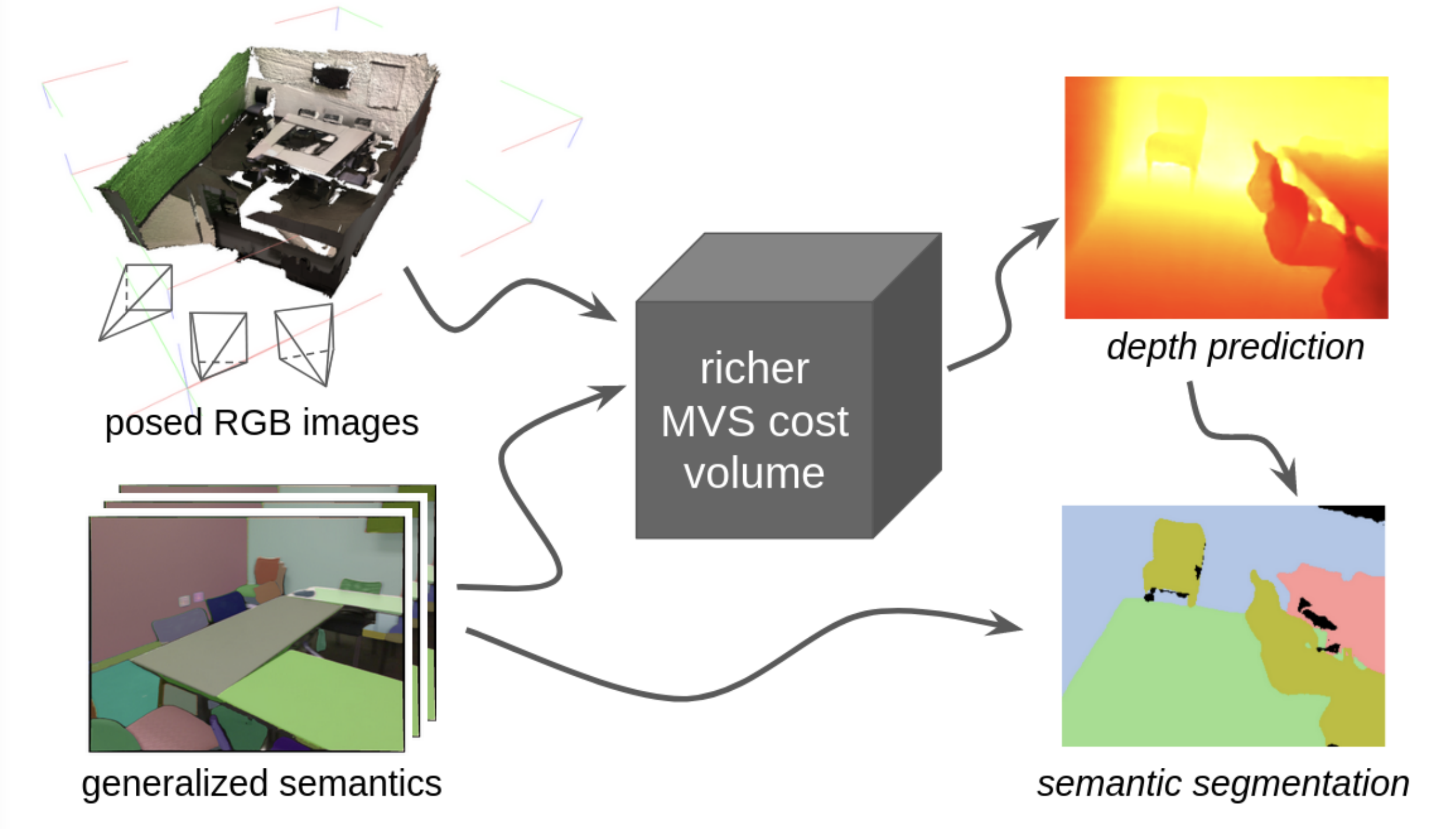

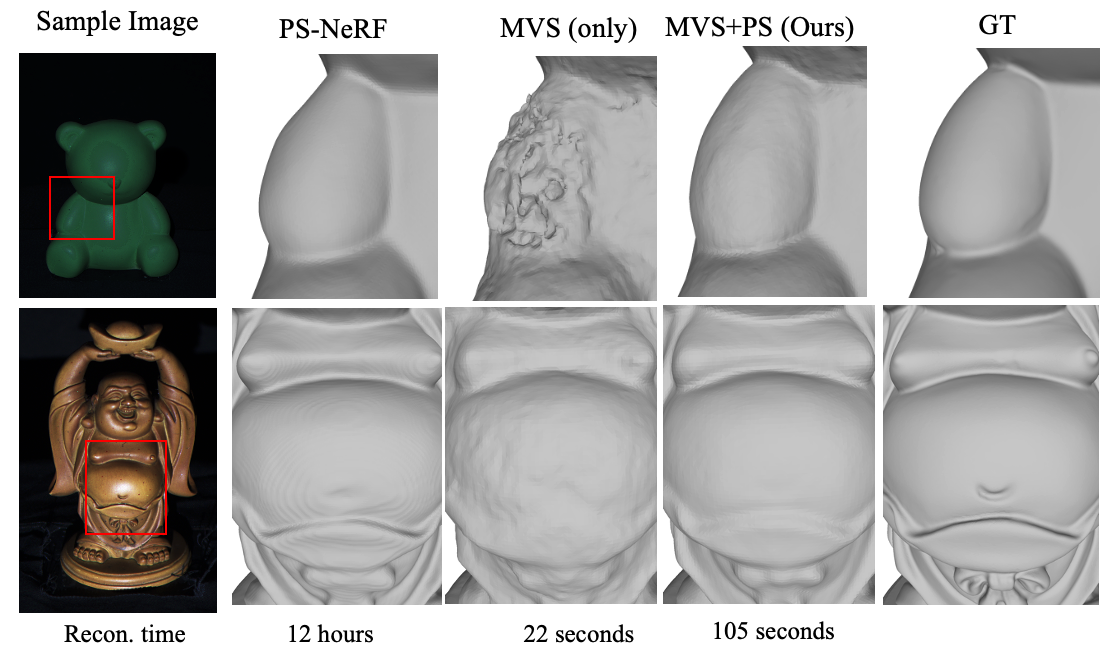

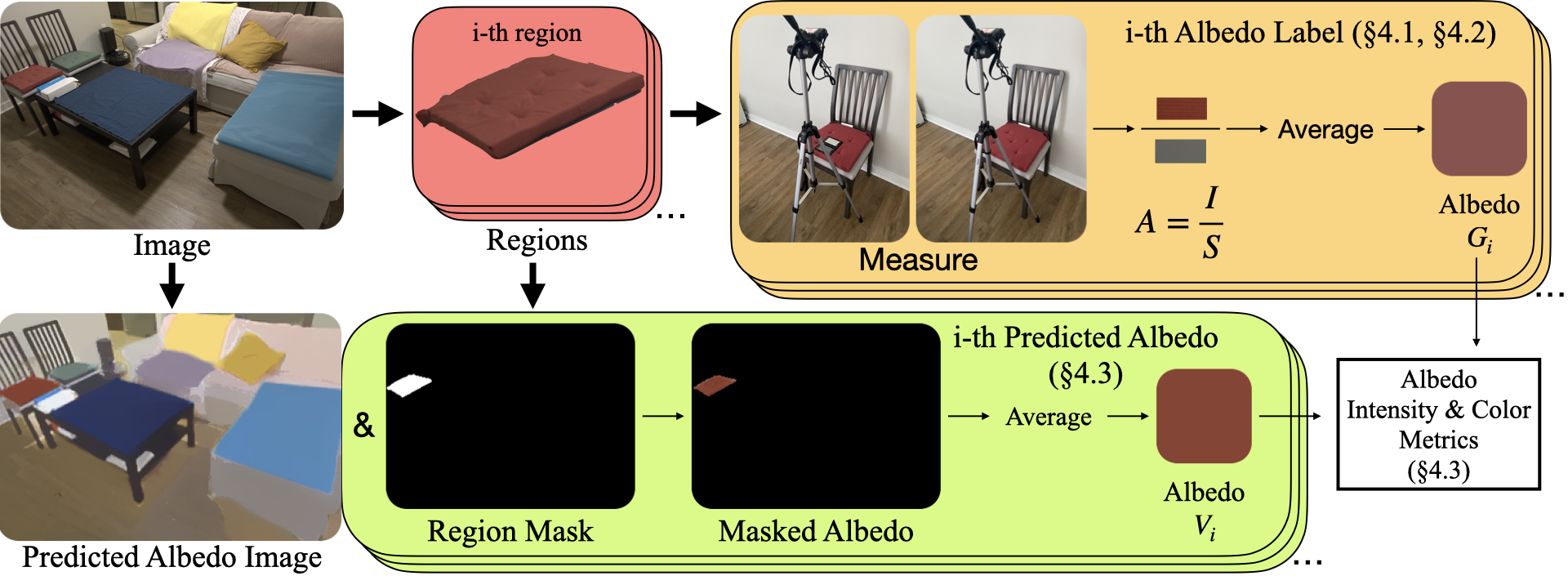

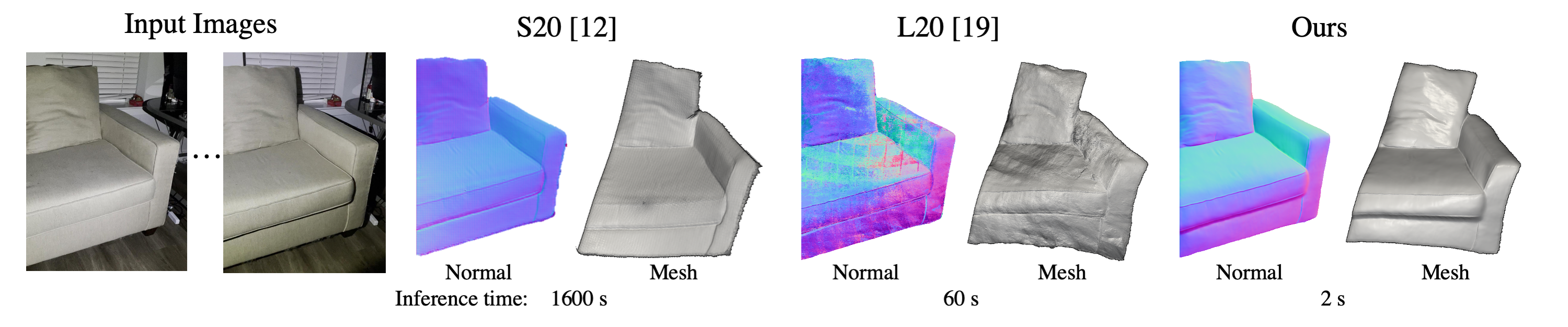

Inverse rendering seeks to recover the physical properties of a scene — geometry, material reflectance, and lighting — from images or videos. We develop explicit estimation methods using neural networks and implicit understanding frameworks that enable intuitive manipulation of disentangled scene components. We leverage foundation models and generative priors to build robust, generalizable techniques.
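As a minimal illustration of what "recovering lighting from images" can mean, the sketch below uses a widely used simplified forward model: Lambertian reflectance under distant lighting expressed with the first nine real spherical-harmonic (SH) basis functions, on a single grayscale channel. This is an assumption chosen for clarity, not a description of any specific method from the lab. The function names (`sh_basis`, `render`, `estimate_light`) are hypothetical, and the SH band attenuation factors are folded into the lighting coefficients. When geometry and albedo are known, image intensity is linear in the nine lighting coefficients, so ordinary least squares recovers them.

```python
import numpy as np

def sh_basis(normals):
    """Evaluate the 9 real SH basis functions (bands 0-2) at unit normals.
    normals: (N, 3) array. Returns an (N, 9) matrix."""
    x, y, z = normals[:, 0], normals[:, 1], normals[:, 2]
    return np.stack([
        0.282095 * np.ones_like(x),
        0.488603 * y,
        0.488603 * z,
        0.488603 * x,
        1.092548 * x * y,
        1.092548 * y * z,
        0.315392 * (3.0 * z**2 - 1.0),
        1.092548 * x * z,
        0.546274 * (x**2 - y**2),
    ], axis=1)

def render(normals, albedo, light):
    """Forward model (assumed): intensity = albedo * shading, where shading is
    a linear combination of SH basis functions with coefficients `light`."""
    return albedo * (sh_basis(normals) @ light)

def estimate_light(normals, albedo, image):
    """Inverse step: with normals and albedo fixed, the model is linear in
    `light`, so a least-squares solve recovers the 9 coefficients."""
    A = albedo[:, None] * sh_basis(normals)
    light, *_ = np.linalg.lstsq(A, image, rcond=None)
    return light

# Synthetic, noise-free example with random normals spread over the sphere.
rng = np.random.default_rng(0)
n = rng.normal(size=(500, 3))
n /= np.linalg.norm(n, axis=1, keepdims=True)
albedo = rng.uniform(0.2, 0.9, size=500)
true_light = rng.normal(size=9)
img = render(n, albedo, true_light)
est = estimate_light(n, albedo, img)
```

On this noise-free synthetic data, `est` matches `true_light` to numerical precision. Real inverse rendering is much harder because geometry and albedo are unknown too, only one hemisphere of normals is visible, and effects such as shadows and interreflections break the linear model. Learned priors are what make the joint problem tractable.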

Relevant Publications

- ScribbleLight: Single Image Indoor Relighting with Scribbles [CVPR'25]

- GaNI: Global and Near Field Illumination Aware Neural Inverse Rendering [arXiv'24]

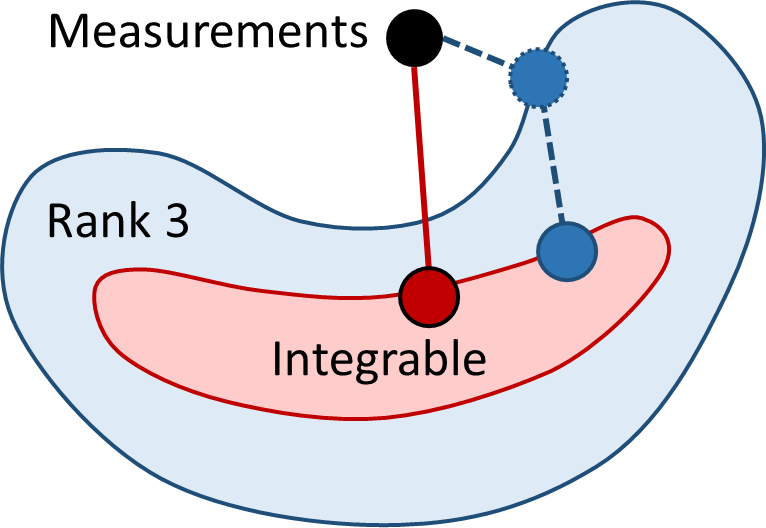

- MVPSNet: Fast Generalizable Multi-view Photometric Stereo [ICCV'23]

- SfSNet: Learning Shape, Reflectance and Illuminance of Faces in the Wild [CVPR'18]

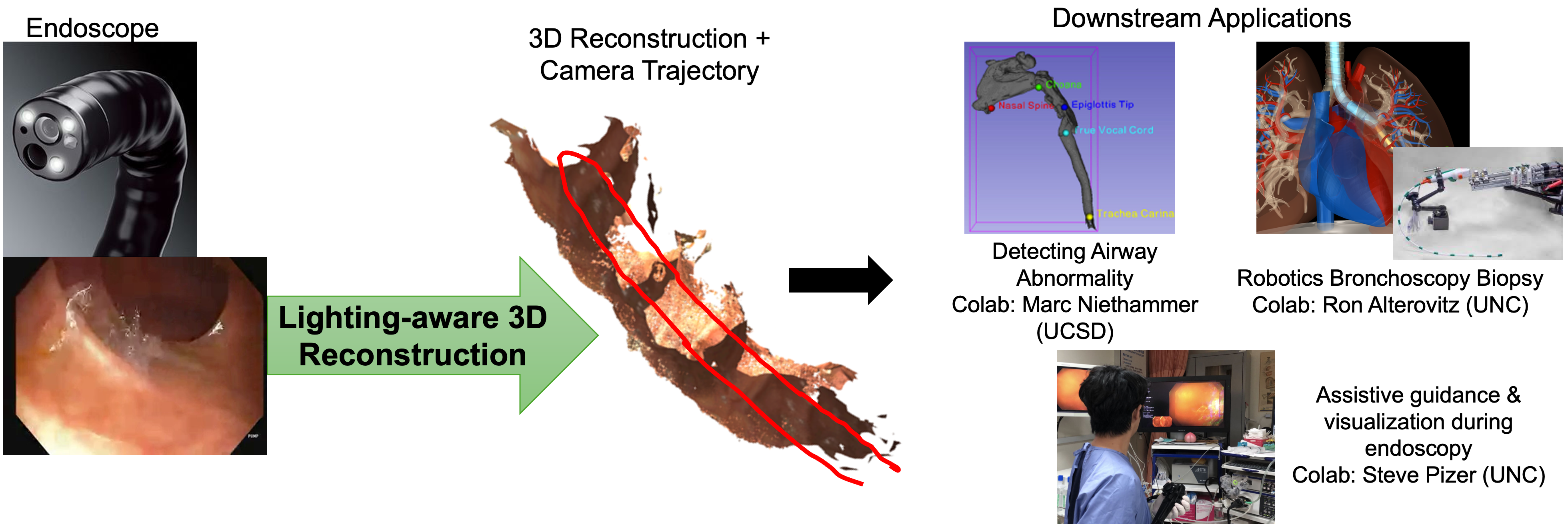

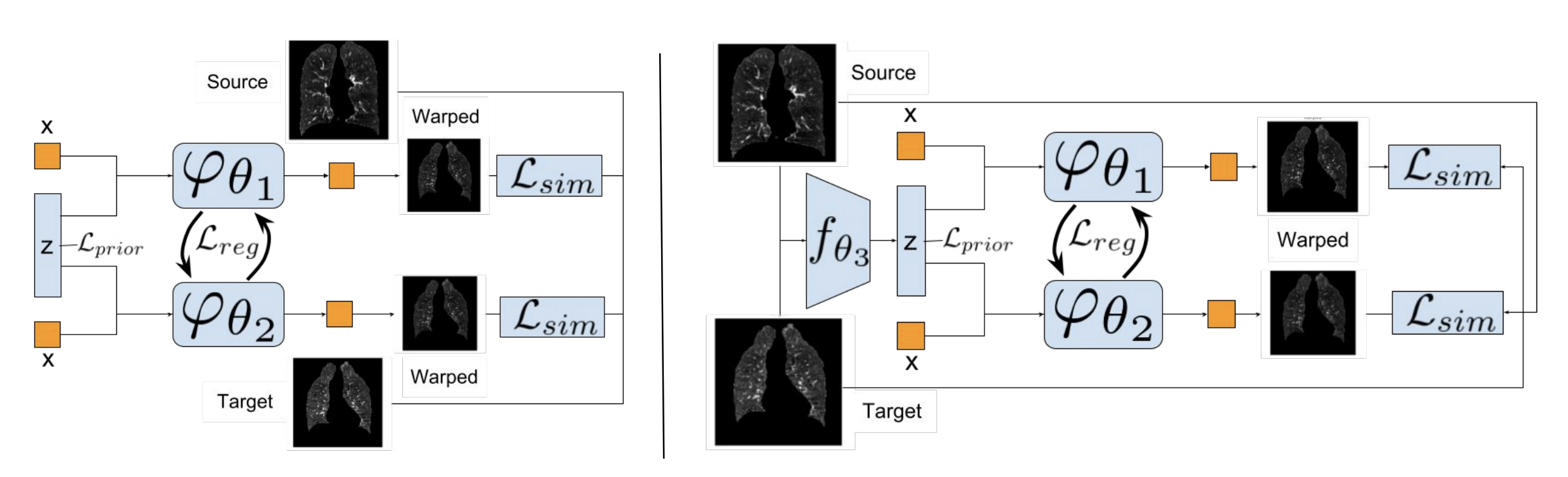

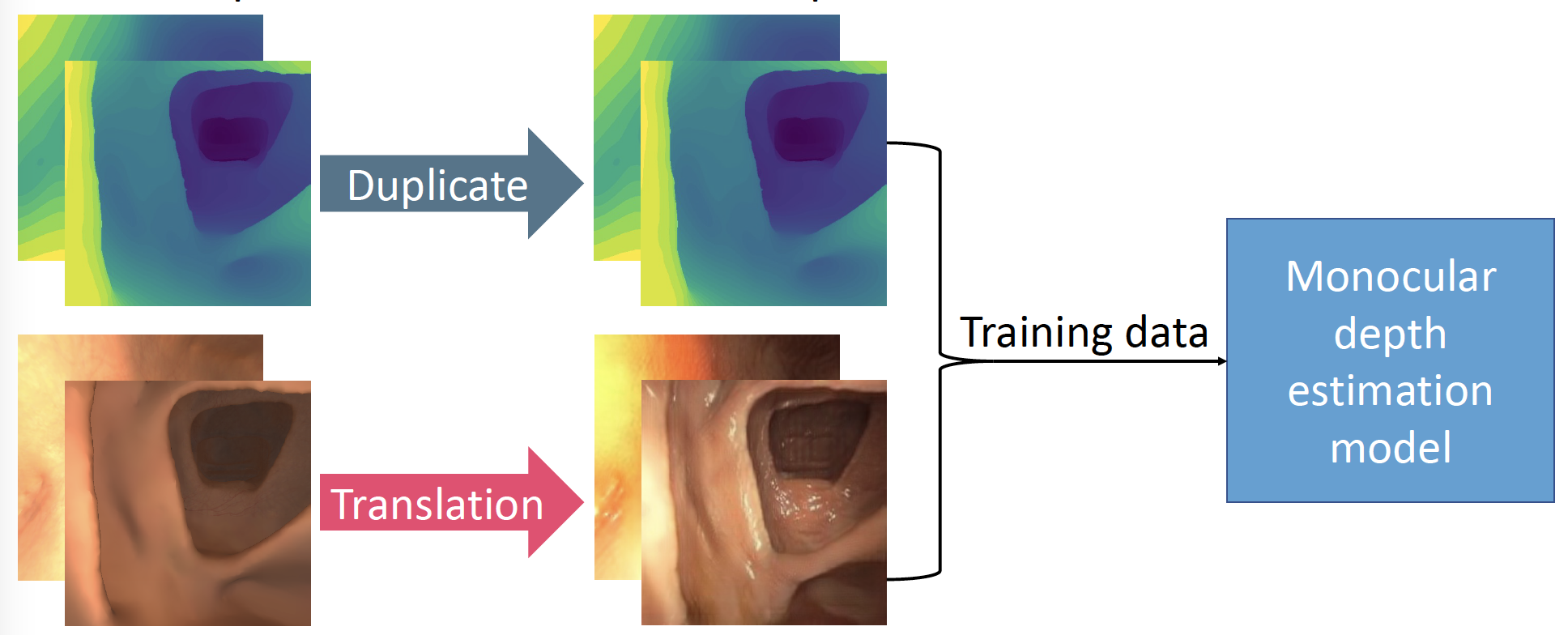

3D Perception from Endoscopy

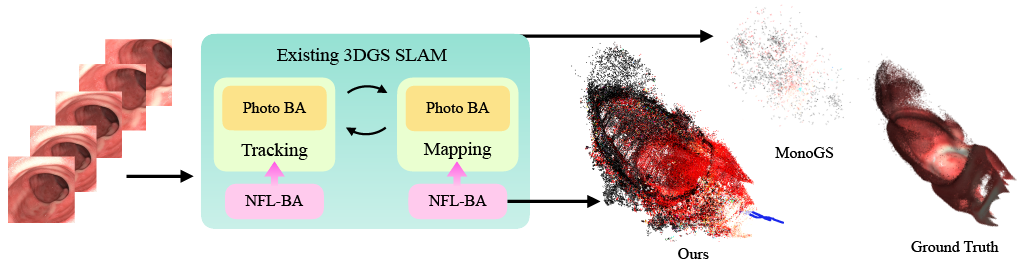

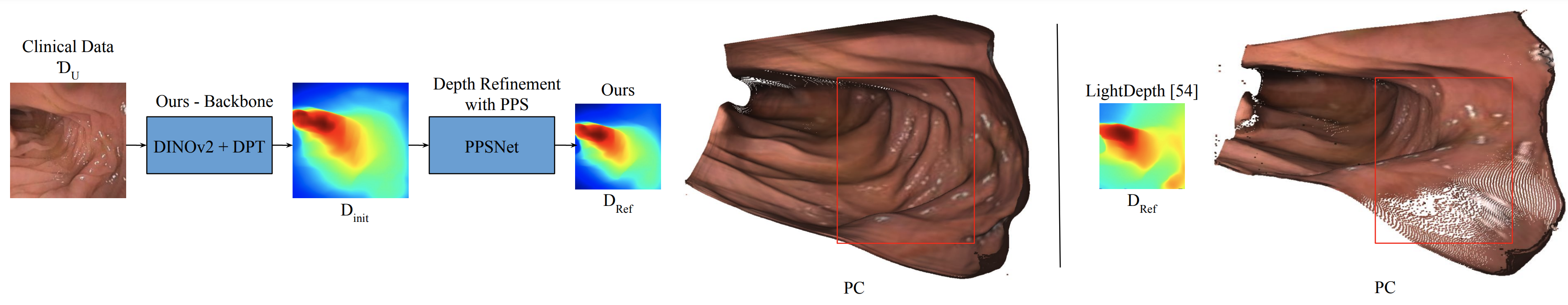

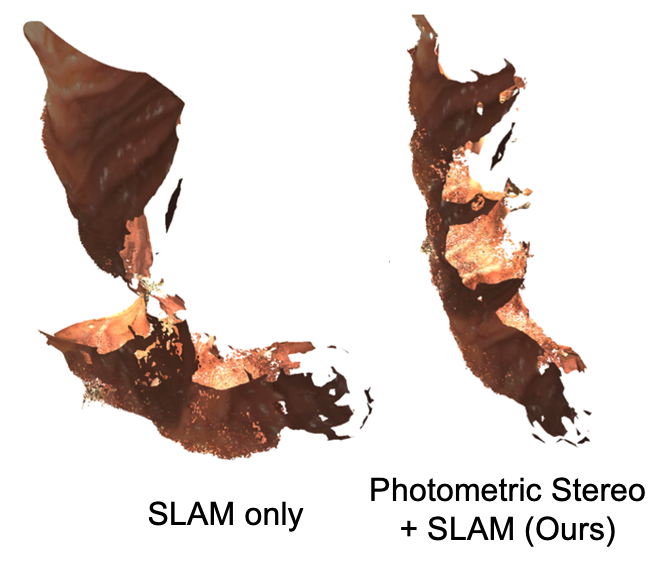

3D perception in endoscopy unlocks critical applications in medical imaging: automated measurement of organ geometry, enhanced visualization for diagnosis, and guidance for robotic surgery. The task is extremely challenging because of complex lighting effects such as near-field illumination, global light transport, specular highlights, and subsurface scattering. We focus on monocular depth estimation and SLAM methods that explicitly model light propagation, working in close collaboration with experts in medical imaging, robotics, gastroenterology, and otolaryngology.
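To make "near-field illumination" concrete, here is a minimal sketch of the simplest version of the effect. It assumes a single isotropic point light located at the camera centre (the origin, with the camera looking along +z), Lambertian reflectance, and no global illumination or specularities. Real endoscopes violate several of these assumptions, and that is exactly why the problem is hard. The function name `near_field_shading` is hypothetical. The key point is the inverse-square falloff: observed brightness depends directly on depth, so intensity carries geometric information.

```python
import numpy as np

def near_field_shading(points, normals, albedo, light_intensity=1.0):
    """Lambertian shading from a point light at the camera centre (origin).
    points, normals: (N, 3) arrays; albedo: (N,) array. Returns (N,) radiance."""
    to_light = -points                          # vector from each surface point to the light
    dist = np.linalg.norm(to_light, axis=1)     # distance to the light source
    l = to_light / dist[:, None]                # unit direction toward the light
    cos_term = np.clip(np.sum(normals * l, axis=1), 0.0, None)  # foreshortening, no back-lighting
    return albedo * light_intensity * cos_term / dist**2        # inverse-square falloff

# Three camera-facing surface points: on-axis at depths 1 and 2, and off-axis at depth 1.
pts = np.array([[0.0, 0.0, 1.0],
                [0.0, 0.0, 2.0],
                [1.0, 0.0, 1.0]])
nrm = np.tile([0.0, 0.0, -1.0], (3, 1))   # normals pointing back toward the camera
out = near_field_shading(pts, nrm, albedo=np.ones(3))
```

The on-axis point at depth 2 is four times darker than the one at depth 1, and the off-axis point is dimmer than the on-axis point at the same depth because it is both farther away and lit at an oblique angle. A distant-light model would predict equal brightness for all three points.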

Relevant Publications

Inverse Physics

Inverse physics involves recovering an object's 3D geometry and physical properties — such as material stiffness or initial force conditions — from sparse or single-view videos. This task is highly ill-posed and requires careful optimization. Our research addresses these challenges by designing effective optimization techniques and learning-based priors that improve inverse estimation under limited observations.
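A toy analysis-by-synthesis example conveys the basic idea of recovering a physical parameter from observed motion. It simulates candidate stiffness values, compares each simulated trajectory with the observation, and keeps the best match. This is only a hypothetical 1D sketch, not the lab's method. The damped mass-spring model, the semi-implicit Euler integrator, the noise level, and the names `simulate` and `estimate_stiffness` are all assumptions. A coarse grid search is used instead of gradient-based optimization because it is simple and robust to the multiple local minima that oscillatory trajectories produce.

```python
import numpy as np

def simulate(k, m=1.0, c=0.1, x0=1.0, v0=0.0, dt=0.01, steps=500):
    """Semi-implicit Euler rollout of a damped 1D mass-spring system.
    Returns the position at each of `steps` time steps."""
    x, v = x0, v0
    xs = np.empty(steps)
    for i in range(steps):
        a = (-k * x - c * v) / m   # Hooke's law plus linear damping
        v += a * dt                # update velocity first (semi-implicit)
        x += v * dt                # then position, using the new velocity
        xs[i] = x
    return xs

def estimate_stiffness(observed, candidates, **sim_kwargs):
    """Pick the candidate stiffness whose simulated trajectory best matches
    the observed one in the mean-squared-error sense."""
    losses = [np.mean((simulate(k, **sim_kwargs) - observed) ** 2) for k in candidates]
    return candidates[int(np.argmin(losses))]

# Synthetic "tracked" positions: ground-truth stiffness 7.5 plus measurement noise.
rng = np.random.default_rng(0)
true_k = 7.5
obs = simulate(true_k) + rng.normal(scale=0.01, size=500)
k_hat = estimate_stiffness(obs, np.linspace(1.0, 20.0, 381))
```

With a full, densely sampled trajectory, `k_hat` lands on or next to the true value. The difficulty described above comes from what this toy omits: unknown 3D geometry, sparse or single-view observations, and many coupled parameters. Under those conditions, many parameter settings explain the data equally well, and learned priors become necessary.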

Relevant Publications

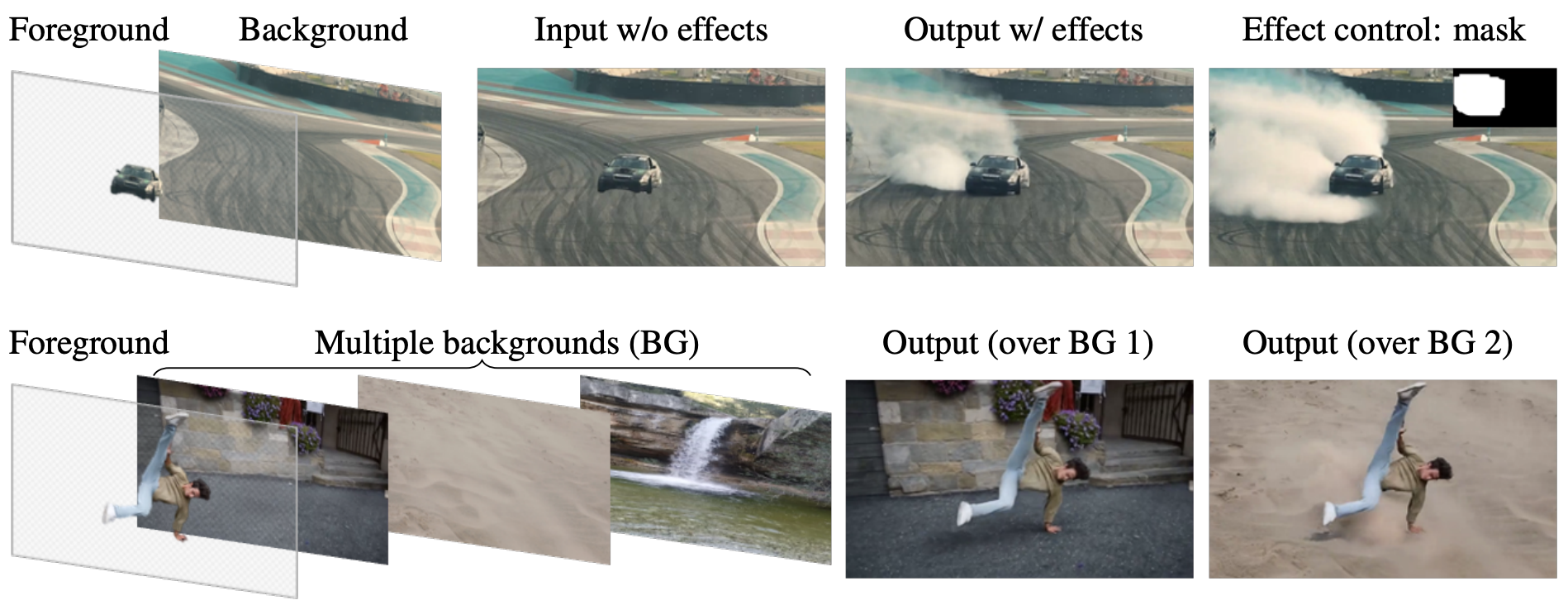

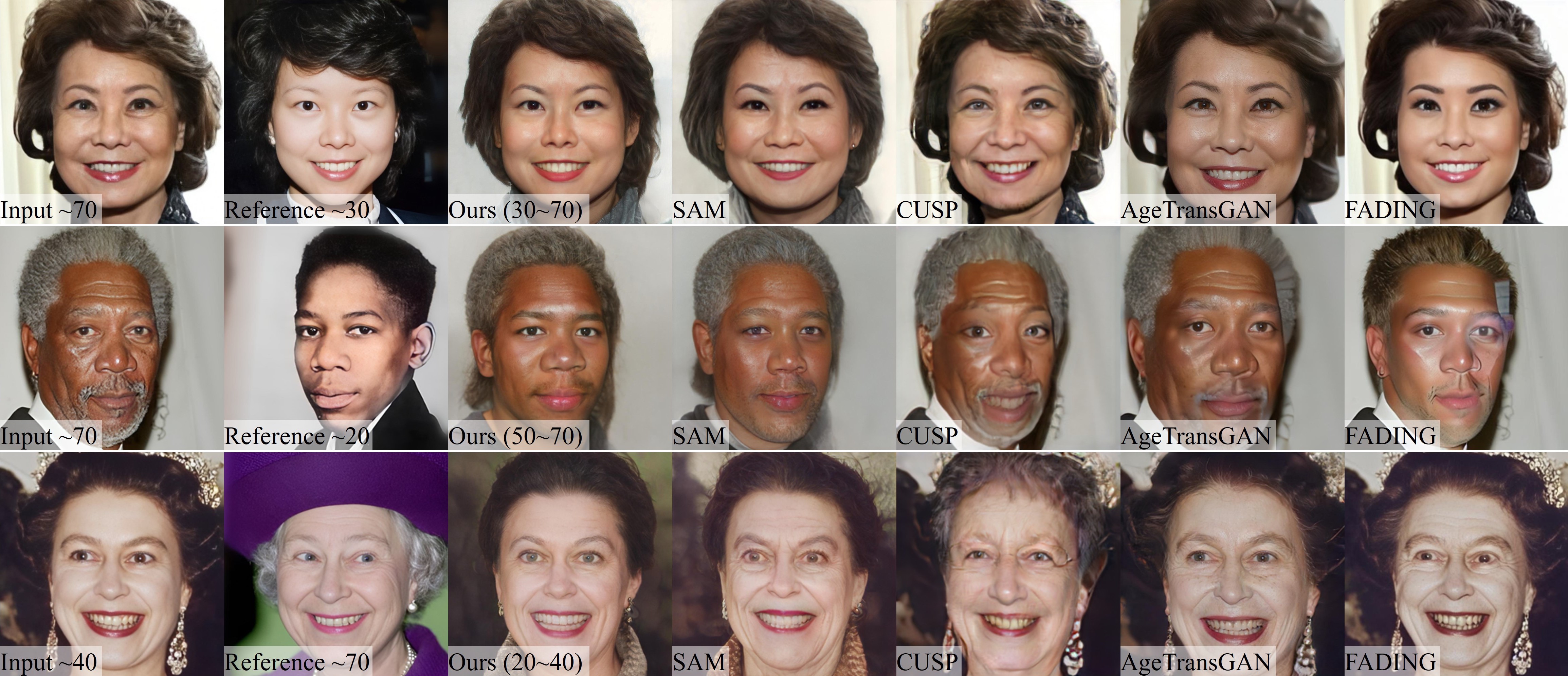

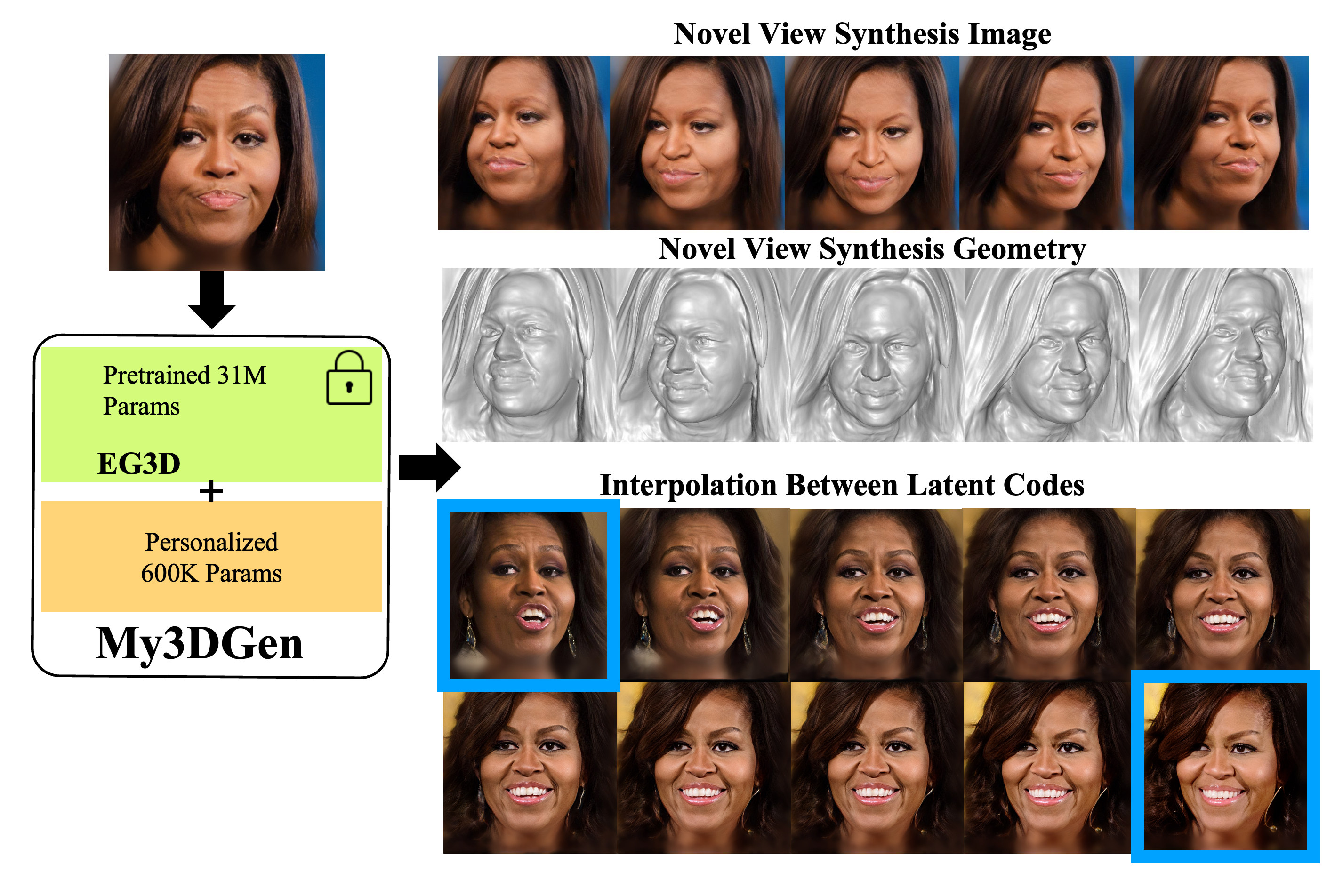

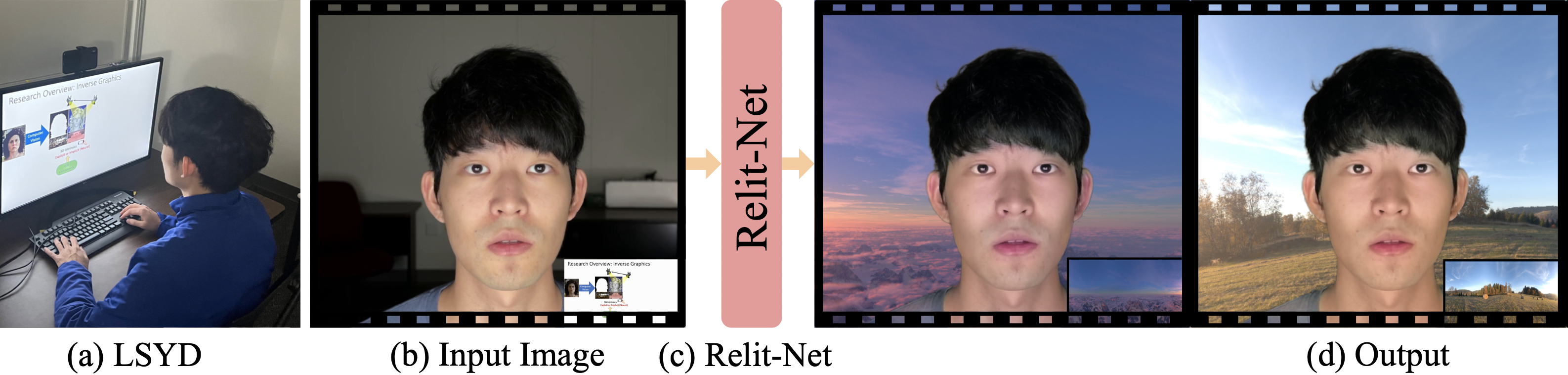

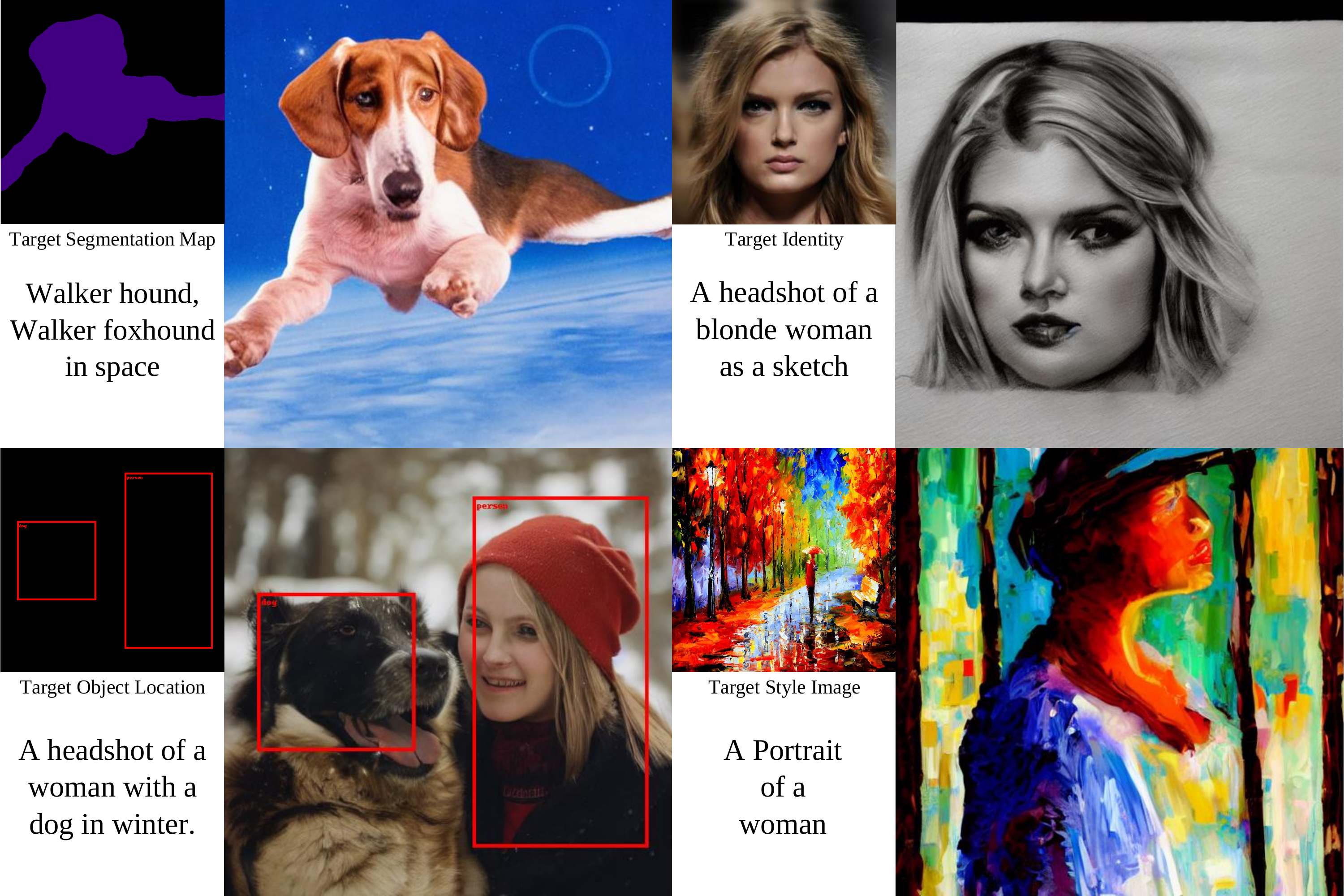

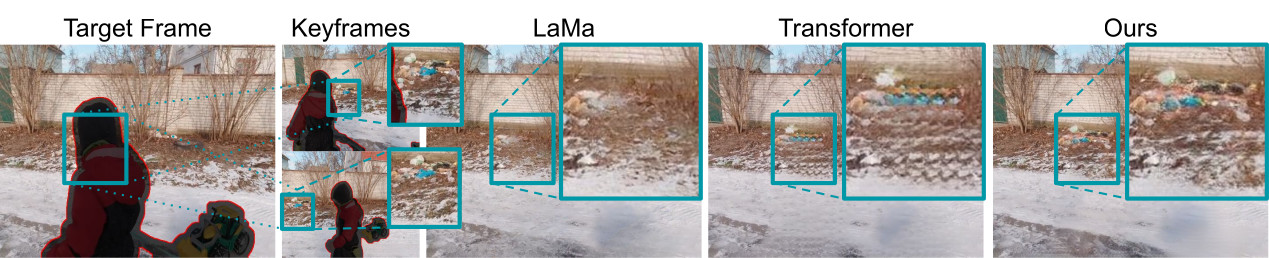

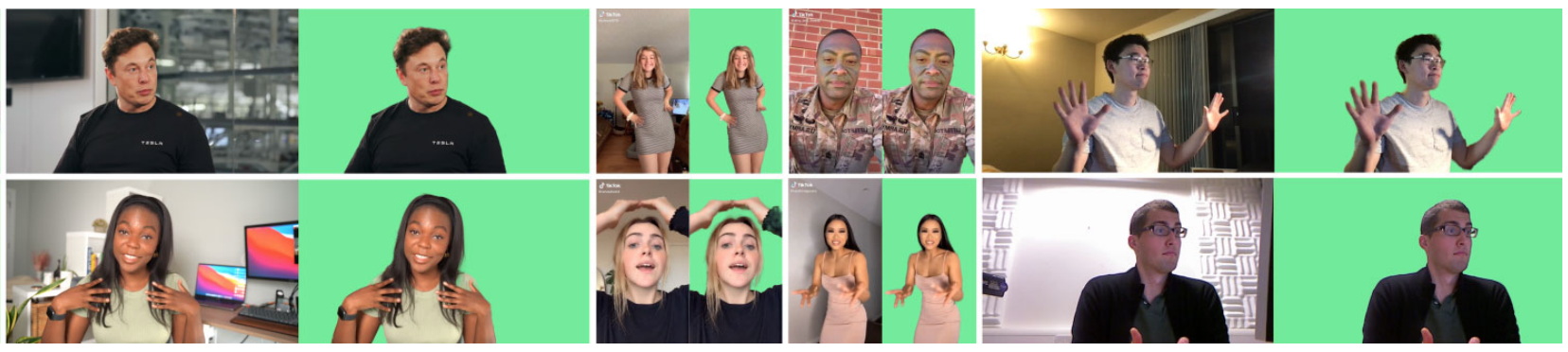

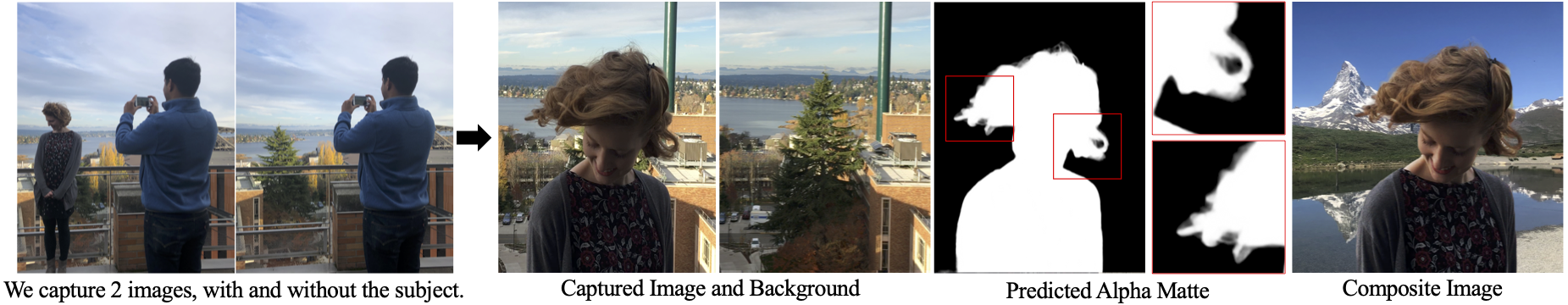

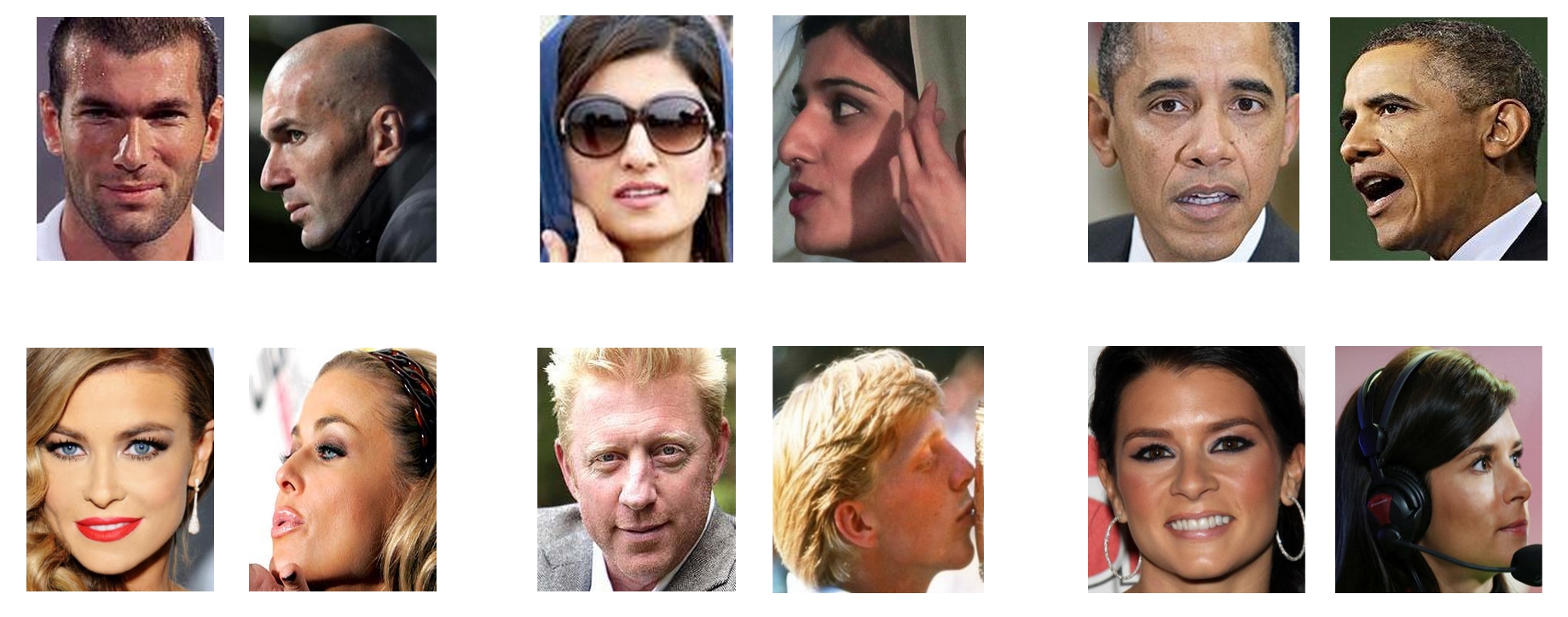

Generative Editing

We explore generative model-based approaches to high-quality facial attribute editing, including aging/de-aging, relighting, harmonization, and identity-preserving modifications for visual effects and creative applications. Our focus is on developing personalized, training-free methods that resolve the long-standing trade-off between inversion accuracy and editability in generative image editing frameworks.

Relevant Publications

We are grateful for the generous support from our sponsors.

Publications

Team

PhD Students

MS Students

Undergraduate Students

Alumni

Former PhD & MS Students

Former Undergraduates

- Amisha Wadhwa (BS)

- Sarathy Selvam (BS)

- Qingyuan Fan (BS)

- Pierre-Nicolas Perrin (BS–MS) → Capital One

- Yulu Pan (BS) → PhD at UNC

- Max Christman (BS) → MS at UNC

- Andrey Ryabtsev (BS–MS, UW) → Google

- Peter Lin (BS–MS, UW) → ByteDance

- Jackson Stokes (BS–MS, UW) → Google

- Peter Michael (BS–MS, UW) → PhD at Cornell University

Teaching

- COMP 590 Introduction to Computer Vision (undergraduate-focused): Fall 2024, Fall 2025

- COMP 790 3D Generative Model: Spring 2024

- COMP 776/590 Computer Vision in 3D World (graduate-focused): Spring 2023, Fall 2023, Spring 2025, Spring 2026

- COMP 790/590 Neural Rendering: Fall 2022

Lab Photos