My Papers & Projects

2014

Abstract:

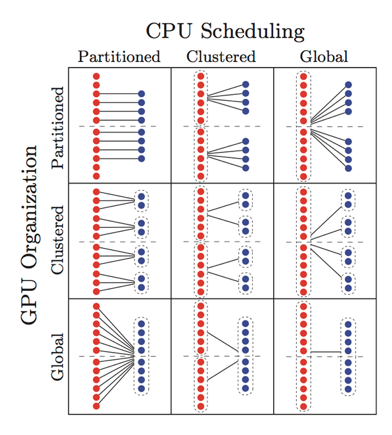

Motivated by computational capacity and power efficiency, techniques for integrating graphics processing units (GPUs) into real-time systems have become an active area of research. While much of this work has focused on single-GPU systems, multiple GPUs may be used for further benefits. Similar to CPUs in multiprocessor systems, GPUs in multi-GPU systems may be managed using partitioned, clustered, or global methods, independent of CPU organization. This gives rise to many combinations of CPU/GPU organizational methods that, when combined with additional GPU management options, result in thousands of “reasonable” configuration choices. In this paper, we explore real-time schedulability of several categories of configurations for multiprocessor, multi-GPU systems that are possible under GPUSync, a recently proposed highly configurable real-time GPU management framework. Our analysis includes the careful consideration of GPU-related overheads. We show that system configuration strongly affects real-time schedulability. We also identify which configurations offer the best schedulability in order to guide the implementation of GPU-based real-time systems and future research.

G. Elliott and J. Anderson, "Exploring the Multitude of Real-Time Multi-GPU Configurations." Proceedings of the 35th IEEE Real-Time Systems Symposium (RTSS), December 2014 (to appear).

Copy of paper available here.

Source code here.

Exploring the Multitude of Real-Time Multi-GPU Configurations

5/23/14

A depiction of the high-level multi-CPU / multi-GPU configurations we evaluate in this paper for a 12-core, 8-GPU platform. Within each box, individual CPUs (red) and GPUs (blue) are shown on the left and right, respectively. Dashed boxes delineate CPU and GPU clusters (no boxes are used in partitioned cases). The horizontal dashed line across each box denotes the NUMA boundary of the system.
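
To make the combination of CPU and GPU organizations concrete, here is a minimal Python sketch that enumerates the high-level pairings (partitioned, clustered, or global, chosen independently for CPUs and GPUs) on a 12-core, 8-GPU platform. It is an illustration only, not code from the paper or from GPUSync: the function names and the cluster sizes (6 CPUs and 4 GPUs per cluster, chosen here to match a hypothetical two-node NUMA split) are assumptions, and the additional GPU management options mentioned in the abstract are not modeled.

```python
from itertools import product

# The three organizational methods named in the abstract.
ORGANIZATIONS = ("partitioned", "clustered", "global")


def make_clusters(n_units, organization, cluster_size):
    """Group unit indices 0..n_units-1 into clusters for one organization.

    partitioned -> every unit is its own cluster
    clustered   -> fixed-size clusters (cluster_size is an assumed value)
    global      -> one cluster containing every unit
    """
    if organization == "partitioned":
        size = 1
    elif organization == "clustered":
        size = cluster_size
    elif organization == "global":
        size = n_units
    else:
        raise ValueError(f"unknown organization: {organization}")
    if n_units % size != 0:
        raise ValueError("cluster size must evenly divide the unit count")
    return [list(range(start, start + size)) for start in range(0, n_units, size)]


def enumerate_configurations(n_cpus=12, n_gpus=8,
                             cpu_cluster_size=6, gpu_cluster_size=4):
    """Map each (CPU organization, GPU organization) pair to its clusters."""
    configs = {}
    for cpu_org, gpu_org in product(ORGANIZATIONS, repeat=2):
        configs[(cpu_org, gpu_org)] = (
            make_clusters(n_cpus, cpu_org, cpu_cluster_size),
            make_clusters(n_gpus, gpu_org, gpu_cluster_size),
        )
    return configs


if __name__ == "__main__":
    for (cpu_org, gpu_org), (cpus, gpus) in enumerate_configurations().items():
        print(f"CPU {cpu_org:<11} / GPU {gpu_org:<11}: "
              f"{len(cpus)} CPU cluster(s), {len(gpus)} GPU cluster(s)")
```

Even this coarse model yields nine pairings; varying cluster sizes and layering further GPU management options on top is what pushes the count into the thousands described in the abstract.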