UNC Laparoscopic Visualization Research

Augmented Reality Technology

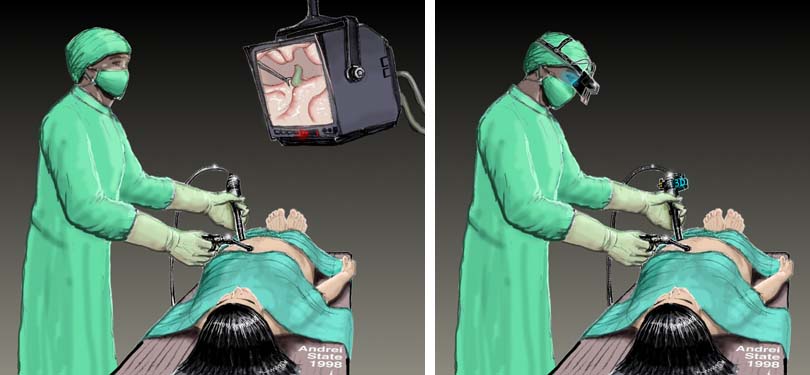

Emerging augmented reality (AR) technologies have the potential to bring the direct visualization advantage of open surgery back to its minimally invasive counterparts. They can augment the physician's view of his surroundings with information gathered from imaging and optical sources, and can allow the physician to move arbitrarily around the patient while looking into the patient. A physician might be able, for example, to see the exact location of a lesion on a patient's liver, in three dimensions and within the patient, without making a single incision. A laparoscopic surgeon may be able to view the pneumoperitoneum from any angle merely by turning his head in that direction, without needing to physically adjust the endoscopic camera. Augmented reality may be able to free the surgeon from the technical limitations of his imaging and visualization equipment, thus recapturing the physical simplicity of open surgery.

We are investigating whether this fusion of computer-generated images with the real-world surroundings will be of use to the physician. We have worked on AR visualization of ultrasound imagery since 1992. Our first systems were used for passive obstetrics examinations. In April 1995 we moved on to ultrasound-guided needle biopsies and cyst aspirations.

The third application for our system is laparoscopic visualization. We modified our ultrasound AR system to acquire and represent the additional information required for a laparoscopic view into the body.

In conventional laparoscopy, the surgeon's view is limited to what is directly in front of the scope. He must frequently readjust the camera, which requires close coordination with the assistant. A surgeon may opt to operate the camera himself to reduce the mismatch between the actual view and the desired view, but that leaves him only one hand with which to operate. Fixed camera holders can be used, but the view is then considerably limited; this is potentially dangerous because delicate structures may lie outside the viewing field.

Because the camera does not necessarily face the same direction as the surgeon, the instruments' on-screen movements do not match the surgeon's hand movements. A surgeon needs a great deal of training to adjust to this disparity.
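The disparity can be illustrated with a small geometric sketch. It is not part of our system; it assumes, for simplicity, that the camera differs from the surgeon's facing direction only by a rotation (yaw) about the vertical axis and that perspective effects are ignored. The function name `on_screen_motion` and its parameters are hypothetical.

```python
import math

def on_screen_motion(hand_right, hand_forward, camera_yaw_deg):
    """Express a hand movement in the camera's frame.

    Assumptions (illustrative only): the movement lies in the horizontal
    plane, given as (rightward, forward) components in the surgeon's frame.
    camera_yaw_deg = 0 means the camera looks the same way as the surgeon;
    positive values turn the camera toward the surgeon's right.
    Returns (rightward, forward) components as seen by the camera.
    """
    t = math.radians(camera_yaw_deg)
    # Camera axes written in the surgeon's (right, forward) coordinates.
    cam_right = (math.cos(t), -math.sin(t))
    cam_forward = (math.sin(t), math.cos(t))
    seen_right = hand_right * cam_right[0] + hand_forward * cam_right[1]
    seen_forward = hand_right * cam_forward[0] + hand_forward * cam_forward[1]
    return (seen_right, seen_forward)

if __name__ == "__main__":
    # The same rightward hand movement, seen by cameras at different yaws.
    for yaw in (0.0, 90.0, 180.0):
        print(yaw, on_screen_motion(1.0, 0.0, yaw))
```

With the camera aligned to the surgeon (yaw 0), a rightward hand movement appears rightward on screen. With the camera facing the surgeon (yaw 180), the same movement appears leftward, which is the kind of reversal the surgeon must learn to compensate for.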

The two-dimensional video displays that are typically used lack depth information. The surgeon can only estimate the distance to structures by moving the camera laterally or by physically probing the structures to gauge their depth. Stereo endoscopes are reported to improve a surgeon's performance, but the surgeon is still limited to viewing only what is directly in front of the camera.

We plan to address these problems by developing technology to automatically extract depth information and geometry of structures within the pneumoperitoneum. Using this information, the system will be able to construct new active views of the target structures from angles other than the one the camera is facing. This will allow the surgeon to see different three-dimensional views of the pneumoperitoneum without having to physically adjust the endoscope, and will enable the physician to use head-motion parallax. Because the operating field is always displayed from the surgeon's point of view, the disparity between his hand movements and the perceived movement of the instruments is eliminated, thereby improving hand-eye coordination.
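The idea of constructing a new view from extracted depth can be sketched as follows. This is a minimal illustration under stated assumptions, not our implementation: it assumes an ideal pinhole camera with focal length `f` and principal point `(cx, cy)`, a new viewpoint that differs from the scope by a translation and a yaw about the vertical axis, and it handles one pixel at a time (a real system would also need surface reconstruction and occlusion handling). All function and parameter names are hypothetical.

```python
import math

def backproject(u, v, depth, f, cx, cy):
    """Pixel (u, v) with known depth -> 3D point in the scope camera frame."""
    return ((u - cx) * depth / f, (v - cy) * depth / f, depth)

def to_view_frame(point, view_position, view_yaw_deg):
    """Express a scope-frame point in the frame of a new viewpoint.

    view_position is the new viewpoint's location in scope-frame coordinates;
    view_yaw_deg turns the new view toward the scope's +x axis (assumption:
    rotation about the vertical axis only).
    """
    px = point[0] - view_position[0]
    py = point[1] - view_position[1]
    pz = point[2] - view_position[2]
    t = math.radians(view_yaw_deg)
    x = math.cos(t) * px - math.sin(t) * pz
    z = math.sin(t) * px + math.cos(t) * pz
    return (x, py, z)

def project(point, f, cx, cy):
    """Pinhole projection; returns None for points behind the viewpoint."""
    x, y, z = point
    if z <= 0:
        return None
    return (f * x / z + cx, f * y / z + cy)

def reproject_pixel(u, v, depth, f, cx, cy, view_position, view_yaw_deg):
    """Where a scope pixel with known depth lands in the new view."""
    p = backproject(u, v, depth, f, cx, cy)
    return project(to_view_frame(p, view_position, view_yaw_deg), f, cx, cy)

if __name__ == "__main__":
    # Move the viewpoint 5 units to the right and compare a near and a far point.
    for depth in (50.0, 100.0):
        print(depth, reproject_pixel(0.0, 0.0, depth, 100.0, 0.0, 0.0, (5.0, 0.0, 0.0), 0.0))
```

In the usage example, shifting the viewpoint sideways moves the nearer point farther across the image than the more distant one. That difference is the head-motion parallax cue: it is available only when the display is re-rendered from the viewer's current position, which in turn requires the depth of each structure.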

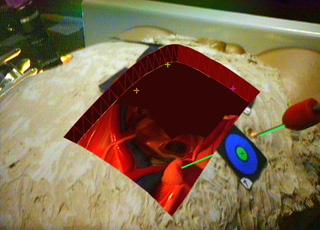

We conducted an initial experiment with a commercial anatomic training model. A snapshot of the physician's view of the model is at the top of this page. Guided by our prototype system, our physician collaborator (Anthony A. Meyer, MD) successfully pierced a small foam target inside the abdominal cavity of the model. For this experiment, the laparoscopic camera was mounted statically and the 3D geometry inside the model was scanned pre-operatively. This experiment showed the importance of extracting the internal surface geometry in real time, using depth extraction methods. The results were encouraging, emphasizing the potential value for the physician of having a natural view of the intervention area from his own physical vantage point, rather than a view constrained by the placement of the laparoscope.

A paper describing our prototype system has been accepted to MICCAI 98. The title, authors, and abstract are below. Some technical details about the AR laparoscopic visualization system are available.


Augmented Reality Visualization for Laparoscopic Surgery

Henry Fuchs¹, Mark A. Livingston¹, Ramesh Raskar¹, D'nardo Colucci¹, Kurtis Keller¹, Andrei State¹, Jessica R. Crawford¹, Paul Rademacher¹, Samuel H. Drake³, Anthony A. Meyer, MD²

¹ Department of Computer Science,

University of North Carolina at Chapel Hill

Abstract

We present the design and a prototype implementation of a three-dimensional visualization system to assist with laparoscopic surgical procedures. The system uses 3D visualization, depth extraction from laparoscopic images, and six degree-of-freedom head and laparoscope tracking to display a merged real and synthetic image in the surgeon's video-see-through head-mounted display. We also introduce a custom design for this display. A digital light projector, a camera, and a conventional laparoscope create a prototype 3D laparoscope that can extract depth and video imagery.

Such a system can restore the physician's natural point of view and head motion parallax that are used to understand the 3D structure during open surgery. These cues are not available in conventional laparoscopic surgery due to the displacement of the laparoscopic camera from the physician's viewpoint. The system can also display multiple laparoscopic range imaging data sets to widen the effective field of view of the device. These data sets can be displayed in true 3D and registered to the exterior anatomy of the patient. Much work remains to realize a clinically useful system, notably in the acquisition speed, reconstruction, and registration of the 3D imagery.

Mail Andrei State for more info