Shahriar Nirjon, Associate Professor

Funded Projects

Audio Privacy: We study the overhearing problem of continuous acoustic sensing devices such as Amazon Echo and Google Home, and develop a smart cover that mitigates personal or contextual information leakage due to the presence of unwanted sound sources in the acoustic environment. Supported by: NSF, 3 yr, 10/2018 - 9/2021, $252K, Role: Lead PI with Co-PI Jiang (Columbia).

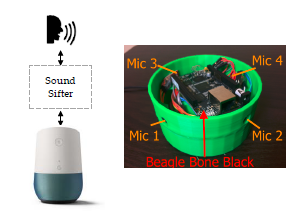

Pedestrian Safety: A wearable headset that combines four MEMS microphones, signal-processing and feature-extraction electronics, and machine learning classifiers running on a smartphone to detect and localize imminent dangers, such as approaching cars, in real time and to warn inattentive pedestrians. Supported by: NSF, 4 yr, 6/2017 - 5/2021, $320K, Role: Co-PI with PI Jiang (Columbia).

IoT Data Privacy: This project takes a data-driven approach to understanding the relationships among all IoT devices in a smart-home environment. Given two IoT devices, the goal is to devise algorithms that automatically identify correlations in their data and inference streams for a given private activity context. Supported by: NCDS Data Fellowship, 1 yr, 7/2016 - 6/2017, $50K, Role: Lead PI.

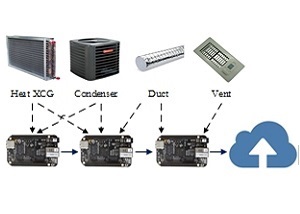

HVAC Acoustic Fingerprinting: A low-cost, distributed acoustic sensing platform and a combination of unsupervised and supervised machine learning techniques, with a human in the loop, are employed to discover, learn, identify, and predict HVAC faults and failures. Supported by: NSF EAGER, 1 yr, 3/2016 - 2/2017, $20K, Role: Co-PI with PI Srinivasan (UFL).

Active and Exploratory Projects

3D Experience Capture: Glimpse.3D is a low-cost stereo bodycam that captures, processes, and wirelessly transmits 3D visual information (point clouds) in real time for viewing on a smartphone. It controls the image acquisition and image processing stages to guarantee the desired quality of the captured 3D information as well as the desired battery life. Supported by: UNC Chapel Hill, Role: PI.

Image Beacon: Being able to broadcast images from BLE beacons enables more powerful and feature-rich applications than those supported by today's beacons. We envision that, just as the web evolved from serving hypertext to streaming multimedia content, the natural successors of today's beacon devices will be ones that broadcast images. Supported by: UNC Chapel Hill, Role: PI.

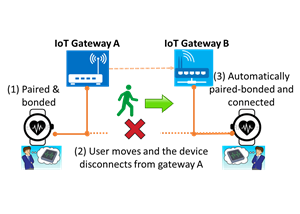

BLE Smart Gateway: Today's BLE devices require a phone to connect to the Internet. We propose an architecture in which a set of stationary BLE gateways is deployed to support seamless BLE connectivity, secure connection migration, and application-level data processing at the edge of a BLE gateway network. Supported by: UNC Computer Science, Role: PI.

Selected Past Projects

Typing-Ring: A ring that enables text input into computers of different form factors, including PCs, smartphones, tablets, and even wearables with tiny screens. The user wears it on the middle finger and types with three fingers on a surface such as a table, a wall, or their lap, as if an invisible QWERTY keyboard were lying underneath the hand. Supported by: HP Labs, Role: Research Scientist. [MOBISYS'15]

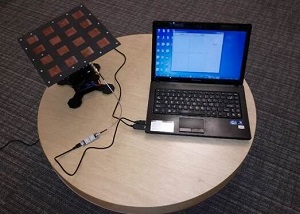

COIN-GPS: This project challenges the common belief that GPS does not work indoors. By using a steerable directional antenna, leveraging the computing power of the cloud for robust acquisition over long signals, and combining acquisition results from different directions over time, we achieve direct GPS-based indoor localization. Supported by: MSR, Role: Research Intern. [MOBISYS'14]

Musical-Heart: A pair of sensor-equipped earphones detects the instantaneous heart rate and activity level of a user while the user listens to music. A biofeedback-based song recommendation algorithm then picks and plays the right music at the right moment to control the user's heart rate. Supported by: NSF, Role: Grad student. [SENSYS'12]

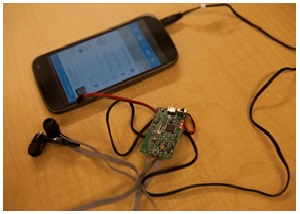

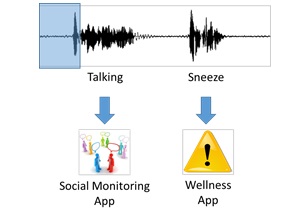

Auditeur: A general-purpose, energy-efficient, and context-aware acoustic event detection service for mobile devices that builds and returns optimized classifiers for a given sound type. It lets mobile app developers register their apps for, and receive notifications of, a wide variety of short-duration acoustic events. Supported by: NSF and DGIST, Korea, Role: Grad student. [MOBISYS'13]

Multi-Nets: A system service for mobile devices that dynamically switches between wireless network interfaces without interrupting ongoing, short-lived TCP connections. The goal of switching is to use the interface that best saves energy, increases throughput, or offloads more data to WiFi. Supported by: Deutsche Telekom, Role: Research Intern. [RTAS'12] [ACM TECS]
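
As a rough illustration of the per-goal selection decision only (not of the connection-preserving switch, which is the hard part of Multi-Nets), the Python sketch below picks an interface for each of the three goals named above. The `Interface` fields and their units, the goal strings, and `pick_interface` are hypothetical assumptions made for this example; they are not taken from the Multi-Nets implementation.

```python
from dataclasses import dataclass


# Hypothetical per-interface estimates; field names and units are assumptions.
@dataclass
class Interface:
    name: str
    throughput_mbps: float   # estimated achievable throughput
    energy_j_per_mb: float   # estimated energy cost per megabyte transferred
    is_wifi: bool


def pick_interface(interfaces, goal):
    """Return the interface that best serves `goal`.

    `goal` is one of the hypothetical strings "energy", "throughput", or "offload".
    """
    if goal == "energy":
        # Lowest energy cost per megabyte wins.
        return min(interfaces, key=lambda i: i.energy_j_per_mb)
    if goal == "throughput":
        # Highest estimated throughput wins.
        return max(interfaces, key=lambda i: i.throughput_mbps)
    if goal == "offload":
        # Prefer WiFi; among WiFi interfaces (or all, if none), take the fastest.
        pool = [i for i in interfaces if i.is_wifi] or interfaces
        return max(pool, key=lambda i: i.throughput_mbps)
    raise ValueError(f"unknown goal: {goal}")


if __name__ == "__main__":
    ifaces = [
        Interface("wlan0", throughput_mbps=20.0, energy_j_per_mb=0.5, is_wifi=True),
        Interface("rmnet0", throughput_mbps=35.0, energy_j_per_mb=2.0, is_wifi=False),
    ]
    print(pick_interface(ifaces, "energy").name)      # wlan0
    print(pick_interface(ifaces, "throughput").name)  # rmnet0
    print(pick_interface(ifaces, "offload").name)     # wlan0
```

A real system would also have to estimate these quantities online and migrate live TCP connections when the choice changes; this sketch assumes static estimates and leaves both out.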

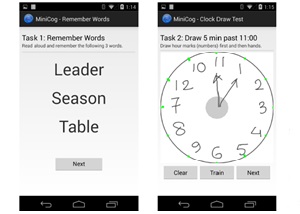

Mobi-Cog: The MOBI-COG mobile application fully automates a widely used, paper-based, 3-minute dementia screening test called the Mini-Cog test, which primary caregivers administer for quick dementia screening in the elderly. Supported by: NSF and PARC, Role: Grad student. [WH'14]

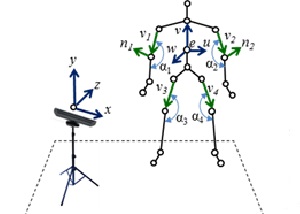

Kintense: Kintense is a robust, accurate, real-time, and evolving system for detecting aggressive actions such as hitting, kicking, pushing, and throwing from streaming 3D skeleton joint coordinates obtained from Kinect sensors. The target population for this system is elderly people suffering from dementia. Role: Grad student. [PerCom'14]

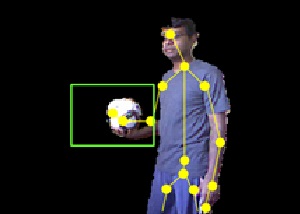

Kinsight: Kinsight localizes and tracks household objects in 3D using depth-camera sensors. It does so indirectly, i.e., by continuously tracking human skeletons and then detecting and recognizing objects only during human-object interactions. Role: Grad student. [DCOSS'12]